"hooking existing programs together […] each new lvl of integration leaving us handcuffed to a new […] representation" http://t.co/Dlnzk8tI3k

miniblog.

Related Posts

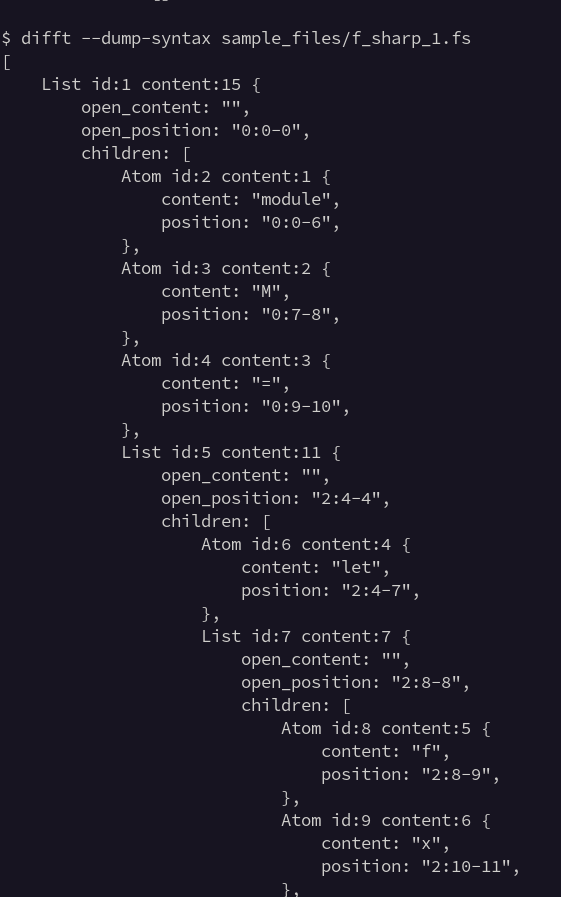

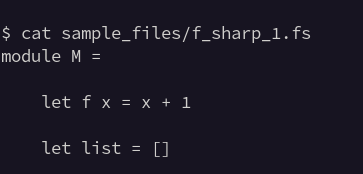

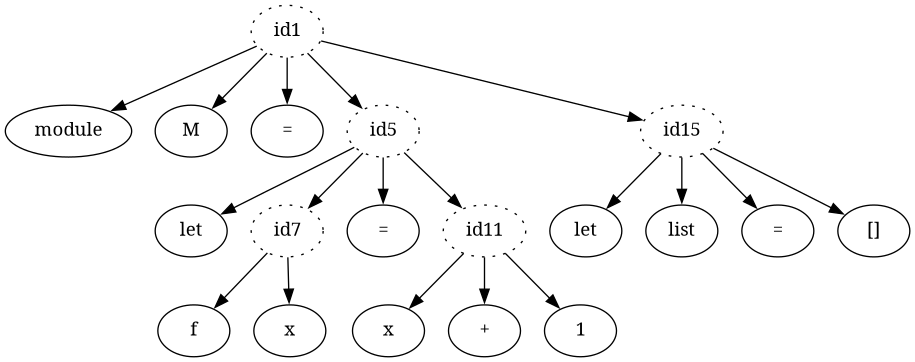

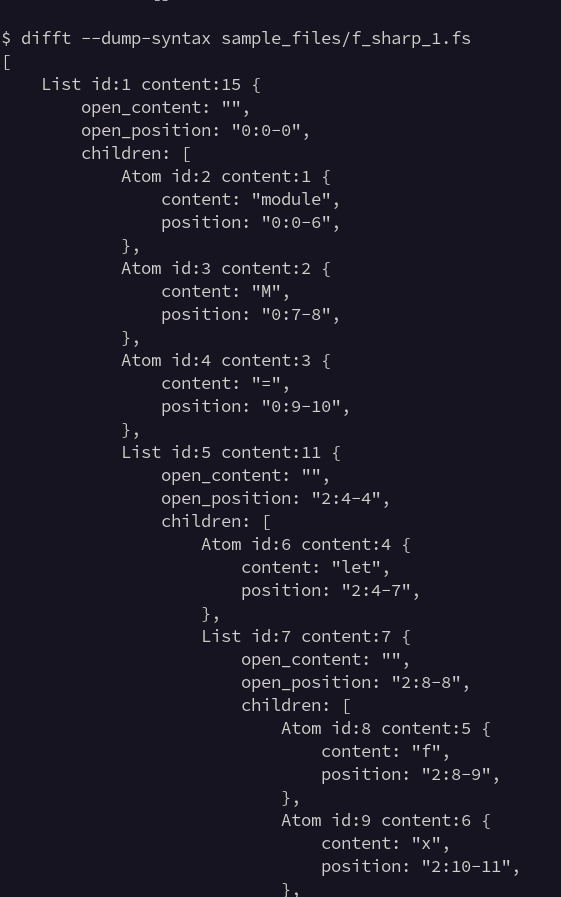

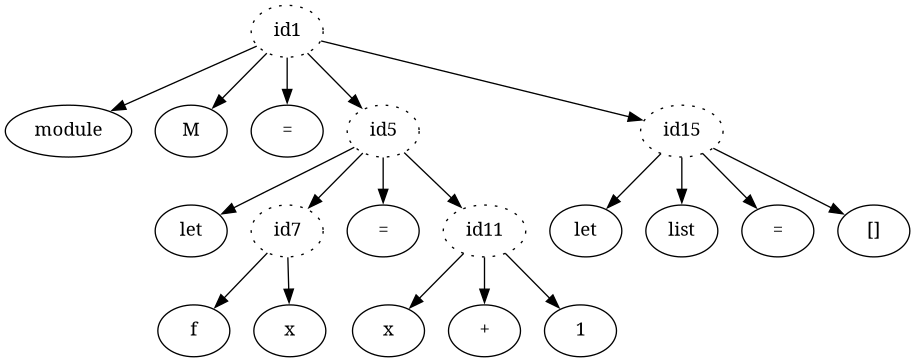

I'm playing with DOT output for debugging syntax trees from difftastic. Here's an F# snippet, the Debug representation, and the DOT rendered as an image.

I'm pleased with the information density on the graphic, but we'll see how often I end up using it.
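For anyone curious what emitting DOT for a tree looks like, here is a minimal sketch in Python. It is not difftastic's actual code: the `Node` class, the `to_dot` function and the `n0`, `n1`, ... node naming are all made up for illustration. The only thing it relies on is the DOT syntax itself (`digraph`, `label=` attributes, `->` edges).

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical syntax tree node: a label plus zero or more children."""
    label: str
    children: list["Node"] = field(default_factory=list)


def escape(text: str) -> str:
    # Escape backslashes and double quotes so the label stays a valid
    # double-quoted DOT string.
    return text.replace("\\", "\\\\").replace('"', '\\"')


def to_dot(root: Node) -> str:
    """Render a tree as a DOT digraph, one numbered node per tree node."""
    lines = ["digraph syntax_tree {"]
    next_id = 0

    def visit(node: Node) -> int:
        nonlocal next_id
        node_id = next_id
        next_id += 1
        lines.append(f'  n{node_id} [label="{escape(node.label)}"];')
        for child in node.children:
            child_id = visit(child)
            lines.append(f"  n{node_id} -> n{child_id};")
        return node_id

    visit(root)
    lines.append("}")
    return "\n".join(lines)


# Usage: a tiny tree for something like `let x = 1`.
tree = Node("let", [Node("x"), Node("1")])
print(to_dot(tree))
```

Piping the printed text through Graphviz (for example `dot -Tpng`) gives the rendered image.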

Noodling with an interpreter for a statically typed language with reified types (e.g. a list knows what type it contains).

Currently I have a single representation of types in both the runtime and the type checker. I think that's a good thing?
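A rough sketch of what "one representation for both" could look like, in Python rather than the interpreter's real implementation language. Everything here (`Type`, `ListValue`, `runtime_type`, `check_append`) is a hypothetical name for illustration, and the toy language only has ints, strings and lists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Type:
    """The single type representation, used by checker and runtime alike."""
    name: str
    args: tuple["Type", ...] = ()

    def __str__(self) -> str:
        if not self.args:
            return self.name
        return f"{self.name}<{', '.join(str(a) for a in self.args)}>"


INT = Type("Int")
STRING = Type("String")


def list_of(elem: Type) -> Type:
    return Type("List", (elem,))


@dataclass
class ListValue:
    """A runtime list that carries its element type, even when empty."""
    elem_type: Type
    items: list


def runtime_type(value) -> Type:
    # Reification: ask a live value for its type, as the same Type object
    # the checker works with.
    if isinstance(value, int):
        return INT
    if isinstance(value, str):
        return STRING
    if isinstance(value, ListValue):
        return list_of(value.elem_type)
    raise TypeError(f"no type for {value!r}")


def check_append(list_type: Type, item_type: Type) -> Type:
    # The checker's rule for append. It only looks at Type values, so it can
    # run ahead of time or be reused at runtime.
    if list_type.name != "List" or list_type.args != (item_type,):
        raise TypeError(f"cannot append {item_type} to {list_type}")
    return list_type


def append(lst: ListValue, item) -> None:
    # The runtime reuses the checker's rule on reified types.
    check_append(runtime_type(lst), runtime_type(item))
    lst.items.append(item)


xs = ListValue(INT, [])
print(runtime_type(xs))  # List<Int>
append(xs, 1)
```

The appeal of sharing is that `check_append` is written once. The possible cost is that the runtime may eventually want a more compact or more concrete representation than the checker does.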

Effects and code as a database in Unison: https://jaredforsyth.com/posts/whats-cool-about-unison/

Unison is looking at changing their program representation to plain SQLite!